In a bold regulatory move, European Union (EU) lawmakers have proposed legislation that would limit the use of high-risk artificial intelligence (AI) applications. If enacted, it will be the first of its kind and will affect businesses across industries in Europe.

Artificial intelligence technology powers many modern, innovative business solutions implemented by successful European companies. Though the EU has carved out exceptions to this crackdown, the legislation will likely dampen AI's growing influence on the European economy.

What the Legislation Aims to Do

A key aim of the legislation is to curb what the European Parliament deems the intrusive aspects of AI surveillance, such as powerful facial recognition technology (which combines video surveillance cameras, computer vision, and predictive imaging) that some feel poses a threat to citizens' privacy. According to Melissa Heikkila from Politico:

The European Union doesn't want to leave powerful tech companies to their own devices like in the U.S., nor does it want to go the Chinese way by harnessing the tech to fashion a surveillance state. Instead, the bloc says it wants a "human-centric" approach that both boosts the tech, but also keeps it from threatening its strict privacy laws.

Furthermore, the EU wants to reduce the use of technology that some legislators believe can manipulate people’s opinions, entice them to take certain decisions, and exploit people’s vulnerabilities. It also wants to eliminate racial biases, which can be magnified by AI surveillance.

The consequences of violating this legislation in the EU will be harsh. Companies found in violation could be fined up to €20 million or 4% of their annual turnover.

Areas of Exemption

EU authorities maintain that they want a balanced boundary on the use of AI rather than an all-out crackdown. The lawmakers have allowed exemptions for matters relating to European security, such as the use of AI on CCTV cameras to investigate terrorism and criminal cases. Countries such as France are strongly in favor of AI surveillance, most likely in reaction to their long-standing battle against terror attacks. Other AI exemptions will be allowed for energy grid efficiency, climate change modeling, and streamlined manufacturing.

The very definition of a ‘high-risk’ AI application is still up for debate. Many privacy experts believe the proposed legislation does not address the imbalance of power between the creator of an AI application and its subject.

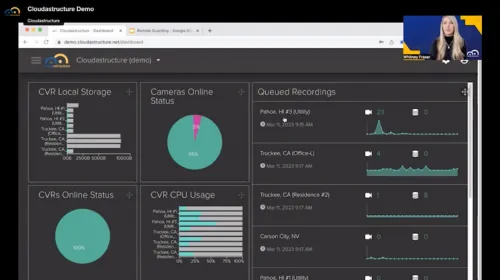

It is worth noting that Cloudastructure does not employ 'Big Brother' tactics in its video surveillance technology. Our industry has come under frequent and deserved criticism for the nearly indiscriminate use of video and facial recognition software in public spaces. Using AI and machine learning software, Cloudastructure technology enables firms to be far more thoughtful and discerning about deploying video surveillance tools.

Cloudastructure places privacy first. This thinking drives our application software and how we operate and manage customer data in the cloud. We can blur faces, enable redaction, turn off facial recognition, and secure data in the cloud. Here is an example of how Cloudastructure has safely applied surveillance systems for a California university.

The Difficult Future with Restrictive AI Legislation in the EU

Cyber experts believe this impending law will hold back technological development in European countries. The compliance burden is expected to cost EU companies an estimated €31 billion over the next five years, weighing on the European economy. Reports also suggest that it will stifle innovation on the continent and limit the ability of AI security providers to expand across Europe.

European economies that depend on AI technology are likely to face a competitive disadvantage compared to countries such as the United States and China, where comparable restrictions on AI technology do not exist. This latest move could push many companies to pass over Europe as a market for their AI businesses. The next few months before the legislation is voted on will be nerve-racking for companies that use AI surveillance.
